A Network of Inauthentic Accounts Promoting Iranian Military Strength Accumulated 25 Million Views in Days:

A Case Study in Why Coordination Detection Matters

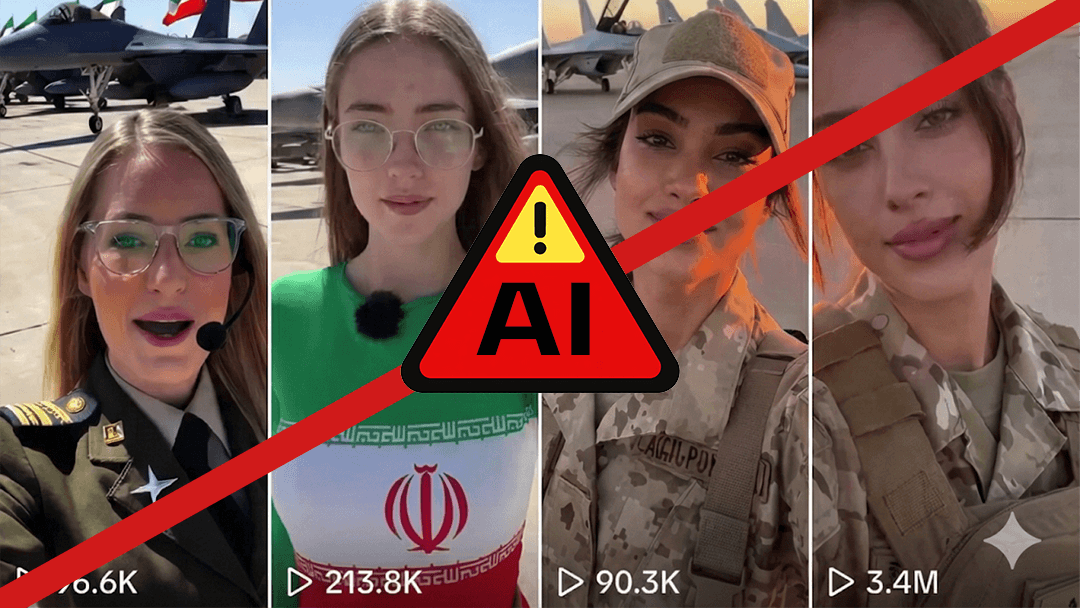

Alethea identified a network of TikTok accounts disseminating AI-generated videos of Iranian female fighter pilots, designed to project military strength, co-opt dissident hashtags, and reshape global perceptions of the Islamic Republic. The campaign accumulated over 25 million views in a matter of days, leveraging synthetic media, coordinated posting behavior, and artificial amplification to flood geopolitically sensitive hashtag spaces during an active period of U.S.-Iran military tensions.

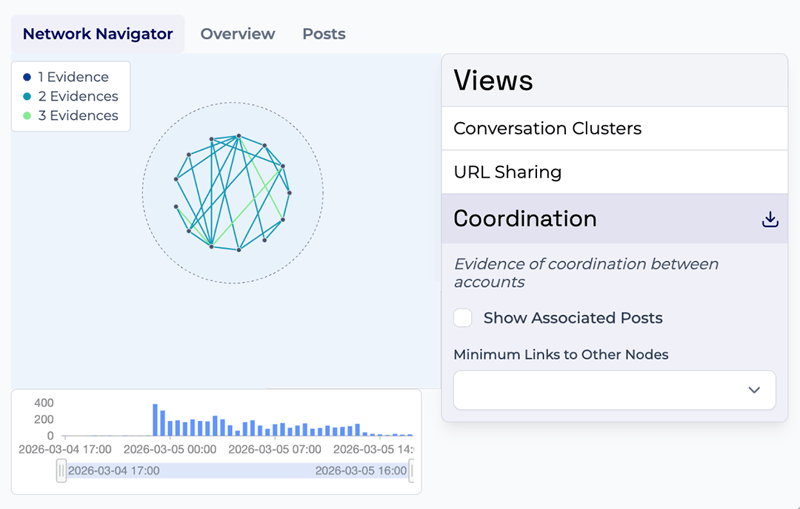

The network exhibited hallmarks of coordinated inauthentic behavior, including batch-created accounts, identical captions, anomalous engagement patterns, and cross-platform spread. These are the exact behavioral signatures that Alethea's AI-powered platform, Artemis, is purpose-built to detect.

For organizations monitoring narrative risk in the defense, energy, Middle East policy, or national security spaces, this case study illustrates how AI-generated content can rapidly distort public discourse, and why coordination detection and synthetic media analysis — often a blind spot of traditional social listening tools — are essential to maintaining situational awareness in high-stakes geopolitical information environments.

As similar campaigns are identified, speed of response matters as much as speed of detection. Alethea’s recently launched agentic AI-powered Mitigation Suite closes the gap between intelligence and action, generating narrative refutations, takedown requests, and coordinated response materials in minutes, so communications, security, and legal teams can move at the speed of the threat.

Coordination Signals Beneath the Surface

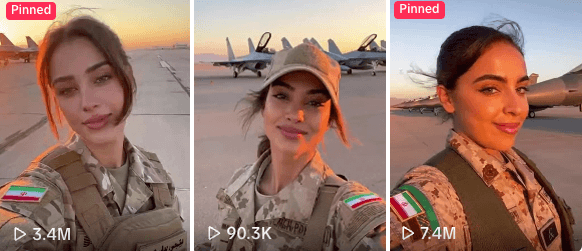

In late February 2026, a cluster of TikTok accounts started sharing AI-generated videos featuring women in military flight suits and IRGC uniforms, posing on airfields in front of fighter jets bearing Iranian flags. In the videos, the AI-generated women say "Habibi, come to Iran" — a play on the popular “Habibi come to” TikTok trend, in which creators invite viewers to visit a destination while showcasing luxury or cultural experiences there.

What appeared on the surface to be lighthearted viral videos celebrating Iranian women was, underneath, an apparent information operation designed to influence public opinion. The network exhibited behavioral indicators consistent with coordinated inauthentic behavior, including signals that Artemis' coordination models are designed to detect:

-

Similar account creation dates: Most of which were created around February 2026, a hallmark of batch-created operations designed for rapid deployment.

-

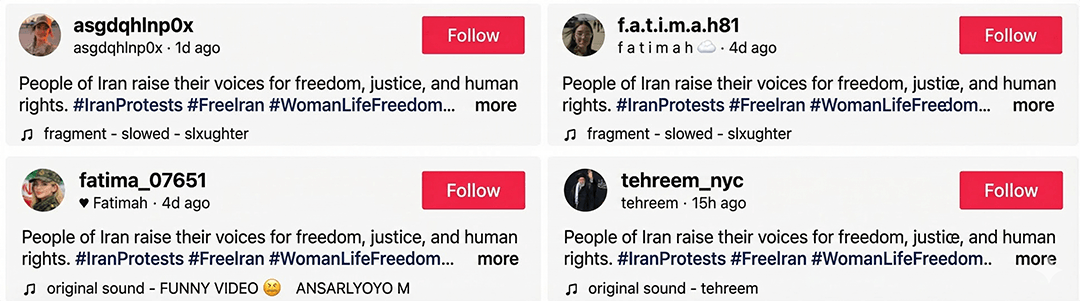

Identical captions: All accounts posted videos with the identical caption: "People of Iran raise their voices for freedom, justice, and human rights" — language designed to co-opt authentic dissident messaging

-

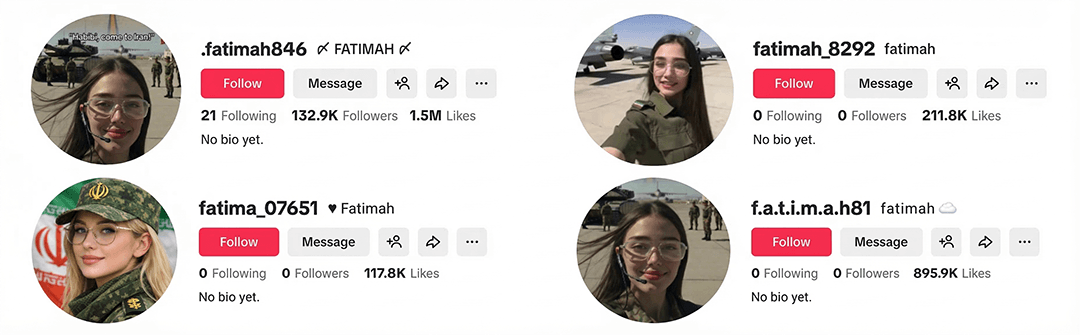

Handle pattern overlap: Account names followed distinct patterns, such as variations on the name "Fatimah" (fatimah_8292, fatimah15878, f.a.t.i.m.a.h81, fatima_07651, .fatimah846) or randomized alphanumeric strings (batliuiywcb, gunsohxexp8, sowhuwzrd96), which is consistent with automated account generation.

-

Single-purpose accounts: The accounts had not shared any content aside from the AI-generated pilot videos or other pro-regime material, suggesting they were built for one purpose only.

-

Synthetic profile images: Profile pictures across the network consisted of the Iranian flag, stock photos of women, or images of killed Supreme Leader Khamenei, none showing signs of belonging to a real individual.

-

Anomalous engagement patterns: Some accounts amassed hundreds of thousands of followers within days of creation. Others had zero followers but millions of likes on their videos. This kind of engagement mismatch is an indicator of artificial amplification.

-

Comment flooding: The videos were saturated with near-identical spam-like comments such as "I love Iran," "Habibi," and "Iran strong," suggesting the use of bot networks or coordinated amplification tools to manufacture the appearance of organic enthusiasm.
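The text-based signals in the list above (handle patterns and near-identical comments) lend themselves to simple checks. The following is a minimal sketch, not a description of how Artemis works: the function names, the prefix length, and the cluster size threshold are illustrative assumptions. The first eight handles are the ones quoted above; the last two handles and the final comment are hypothetical, added only for contrast.

```python
import re
from collections import Counter

# Handles quoted in the list above, plus two hypothetical unrelated handles.
HANDLES = [
    "fatimah_8292", "fatimah15878", "f.a.t.i.m.a.h81", "fatima_07651", ".fatimah846",
    "batliuiywcb", "gunsohxexp8", "sowhuwzrd96",
    "travel_with_sam", "chef.maria",  # hypothetical, for contrast
]

# Comment strings modeled on the examples above; the last one is hypothetical.
COMMENTS = [
    "I love Iran", "i love iran!", "Habibi", "habibi ",
    "Iran strong", "Beautiful footage, where was this filmed?",
]


def handle_stem(handle: str) -> str:
    """Keep only the letters of a handle, lowercased (drops digits, dots, underscores)."""
    return re.sub(r"[^a-z]", "", handle.lower())


def stem_clusters(handles, prefix_len=6, min_size=3):
    """Count handles sharing a letter-only prefix; keep prefixes seen min_size+ times."""
    counts = Counter(handle_stem(h)[:prefix_len] for h in handles)
    return {stem: n for stem, n in counts.items() if n >= min_size}


def normalize_text(text: str) -> str:
    """Lowercase, strip punctuation, and collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", "", text.lower())
    return " ".join(cleaned.split())


def duplicate_share(texts):
    """Fraction of texts whose normalized form appears more than once."""
    counts = Counter(normalize_text(t) for t in texts)
    repeated = sum(n for n in counts.values() if n > 1)
    return repeated / len(texts) if texts else 0.0


if __name__ == "__main__":
    print(stem_clusters(HANDLES))     # {'fatima': 5}
    print(duplicate_share(COMMENTS))  # 4 of the 6 comments are repeats
```

The same `duplicate_share` check could be applied to captions. Randomized alphanumeric handles are deliberately left out of the sketch, since telling random strings from ordinary names reliably takes more than a one-line heuristic.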

Individually, any one of these signals might be dismissed as a coincidence. Taken together, however, they form a clear pattern of coordinated inauthentic behavior, exactly the kind of pattern Artemis detects.
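One way to make the "taken together" reasoning concrete is to evaluate several independent signals over a cluster of accounts and report which ones fire. The sketch below is illustrative only: the `Account` fields, the thresholds, and the sample accounts are all hypothetical and are not drawn from the investigation's data. It covers two of the signals described above: creation-date clustering and the "zero followers but millions of likes" mismatch.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Account:
    handle: str
    created: date
    followers: int
    total_likes: int


def creation_burst_share(accounts, window_days=14):
    """Largest share of accounts created within any span of window_days."""
    days = sorted(a.created.toordinal() for a in accounts)
    best, start = 0, 0
    for end in range(len(days)):
        # Shrink the window from the left until it spans at most window_days.
        while days[end] - days[start] > window_days:
            start += 1
        best = max(best, end - start + 1)
    return best / len(days) if days else 0.0


def engagement_mismatch(account, min_likes=100_000, max_followers_per_like=0.001):
    """True when an account has many likes but almost no followers."""
    if account.total_likes < min_likes:
        return False
    return account.followers / account.total_likes < max_followers_per_like


def signals_fired(accounts, burst_threshold=0.8, mismatch_threshold=0.3):
    """Names of the signals whose (hypothetical) thresholds the cluster meets."""
    fired = []
    if creation_burst_share(accounts) >= burst_threshold:
        fired.append("creation_burst")
    mismatched = sum(engagement_mismatch(a) for a in accounts)
    if accounts and mismatched / len(accounts) >= mismatch_threshold:
        fired.append("engagement_mismatch")
    return fired


# Hypothetical sample cluster, for illustration only.
SAMPLE = [
    Account("acct_a", date(2026, 2, 20), 0, 2_500_000),
    Account("acct_b", date(2026, 2, 22), 0, 1_200_000),
    Account("acct_c", date(2026, 2, 24), 350_000, 900_000),
    Account("acct_d", date(2026, 2, 25), 12, 40_000),
    Account("acct_e", date(2024, 6, 1), 800, 5_000),
]

if __name__ == "__main__":
    print(signals_fired(SAMPLE))  # ['creation_burst', 'engagement_mismatch']
```

A single fired signal is weak evidence on its own. An analyst would typically want several to agree before treating a cluster as coordinated, and would still review the accounts by hand.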

Co-Opting Dissent to Serve the State

What made this campaign sophisticated was its narrative design. The content blended pro-Iranian military imagery with hashtags traditionally associated with Iran's protest movements that erupted in 2022, including #WomanLifeFreedom and #FreeIran.

This content serves a threefold purpose: to all audiences, it demonstrates the strength of the Islamic Republic and its military; domestically, it counters the regime’s documented repression of women by depicting empowered female pilots; and internationally, it reframes the global perception from one shaped by images of morality police and protest crackdowns to one of female empowerment in service of the state.

By flooding hashtag spaces originally created by Iranian dissidents with pro-regime content, the campaign aimed to co-opt authentic opposition language, dilute genuine dissident voices, project a modernized image of the Islamic Republic, or all three simultaneously.

This is precisely the kind of campaign that traditional text-based social listening tools are likely to misread. A platform scanning for keywords and sentiment would categorize captions about "freedom, justice, and human rights," hashtags like #WomanLifeFreedom, and imagery of empowered women as positive, pro-feminist content aligned with authentic activist movements. Because such operations are engineered to evade conventional monitoring, detecting their true nature requires a combination of AI-powered coordination detection, synthetic media identification, and expert-led human analysis.
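The blind spot described above can be illustrated with a toy example. The keyword lexicon, the scoring rule, and the account identifiers below are hypothetical and far simpler than any real social listening product. The point is only that a per-post text score sees nothing unusual in the caption, while a per-network view shows the same caption posted by many accounts.

```python
import re

# Hypothetical, deliberately tiny sentiment lexicon.
POSITIVE_TERMS = {"freedom", "justice", "rights", "voices"}
NEGATIVE_TERMS = {"attack", "threat", "war"}

# Caption quoted in this case study.
CAPTION = "People of Iran raise their voices for freedom, justice, and human rights"


def keyword_sentiment(text: str) -> str:
    """Label text by counting lexicon hits; content-only, blind to who posted it."""
    words = re.findall(r"[a-z]+", text.lower())
    score = sum(w in POSITIVE_TERMS for w in words) - sum(w in NEGATIVE_TERMS for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def caption_reuse(posts):
    """Map each caption to the number of distinct accounts that posted it."""
    accounts_by_caption = {}
    for account, caption in posts:
        accounts_by_caption.setdefault(caption, set()).add(account)
    return {caption: len(accounts) for caption, accounts in accounts_by_caption.items()}


# Hypothetical posts: five made-up accounts sharing the same caption.
POSTS = [(f"account_{i}", CAPTION) for i in range(5)]

if __name__ == "__main__":
    print(keyword_sentiment(CAPTION))     # positive
    print(caption_reuse(POSTS)[CAPTION])  # 5
```

Neither check says anything about whether the video itself is synthetic, which is why the combination described above also includes synthetic media identification and human review.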

From TikTok to the Broader Information Ecosystem

The campaign did not remain contained on TikTok. The videos then spread to X, YouTube, and Meta’s platforms, potentially reaching larger and more geographically diverse audiences, generating organic spread, sustaining cross-platform persistence, extending the content’s lifespan, and reducing reliance on any one social network.

This pattern mirrors the laundering tactics Alethea has documented in previous investigations tracking foreign influence operations, where content is seeded on one platform and then pushed into adjacent communities to create the illusion of widespread organic engagement.

Definitive attribution remains an ongoing area of analysis, as the accounts lack sufficient identifying metadata. However, the timing of this campaign, which began spreading in late February 2026 just as U.S. military operations in Iran took place, indicates that the videos may be part of a broader Iranian information warfare posture designed to project regime strength and shape global narratives during a period of international conflict.

The speed at which the network accumulated over 25 million views underscores a growing vulnerability: AI-generated short-form video, paired with coordinated amplification, can achieve massive reach during fast-moving crises when public attention and emotional engagement are at their highest.

Who Is at Risk?

Organizations operating in geopolitically sensitive sectors are especially exposed to campaigns like this one:

Defense & Aerospace: Coordinated pro-regime military imagery can distort public narratives around active conflicts, complicate strategic communications, and undermine messaging from allied governments and defense partners.

Critical Infrastructure & Logistics: Transportation, energy, tech, and supply chain operators are frequent targets during periods of geopolitical tension. Coordinated narrative campaigns tied to the Iran conflict can amplify calls for disruption, fuel boycotts, and shift public sentiment in ways that create operational risk.

Cybersecurity: Such campaigns are increasingly relevant to CISOs and digital risk teams tasked with tracking the latest adversarial behaviors, emerging tactics, and shifting digital environments.

NGOs & Civil Society: Organizations supporting Iranian dissidents or human rights causes face a direct threat when pro-regime operations flood their hashtag spaces, diluting authentic voices and complicating advocacy efforts.

In short: if your organization's mission, reputation, or stakeholders intersect with the Iran conflict, campaigns like this one are designed to shape the information environment you operate in. Artemis provides the cross-platform visibility, coordination detection, and AI-enabled analysis organizations need to identify and mitigate these threats before they reach critical mass.
